Three DeepSeek Secrets You Never Knew

Posted by Maybelle on 2025-02-01 11:59

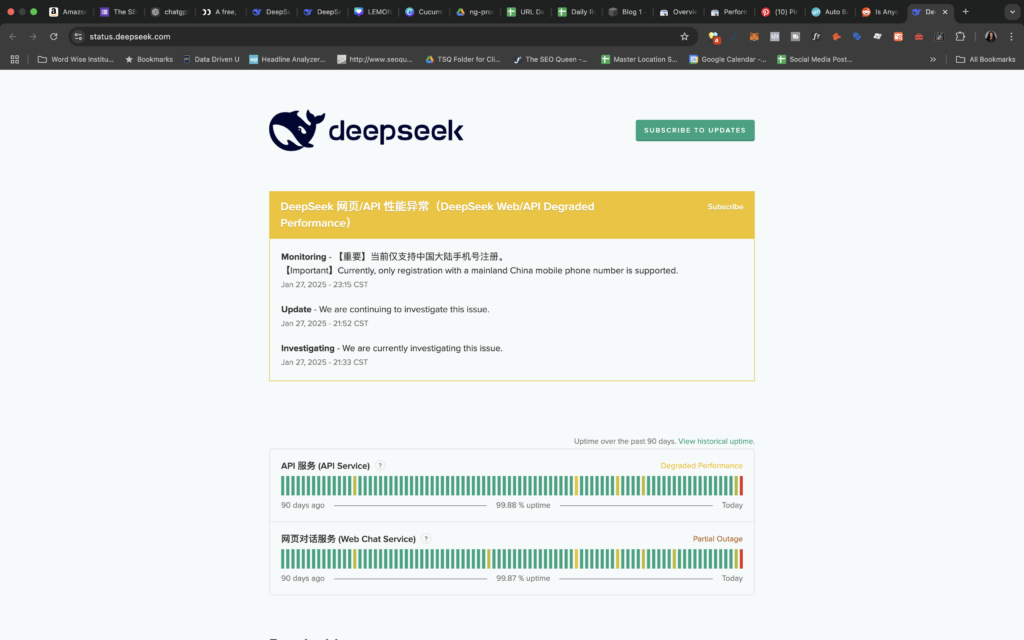

In only two months, DeepSeek came up with something new and interesting. ChatGPT and DeepSeek represent two distinct paths in the AI landscape: one prioritizes openness and accessibility, while the other focuses on performance and control. A self-hosted copilot uses powerful language models to offer intelligent coding assistance while keeping your data secure and under your control. That control over your own data is the main advantage self-hosted LLMs have over their hosted counterparts.

Both post impressive benchmark results compared to their rivals but use significantly fewer resources because of the way the LLMs were built. Despite being the smallest model, with only 1.3 billion parameters, DeepSeek-Coder outperforms its larger counterparts, StarCoder and CodeLlama, on these benchmarks. The authors also note evidence of data contamination, as their model (and GPT-4) performs better on problems from July/August.

DeepSeek helps organizations minimize these risks through in-depth data analysis of the deep web, darknet, and open sources, exposing indicators of legal or ethical misconduct by entities or key figures associated with them. There are currently open issues on GitHub for CodeGPT, and the problem may have been fixed by now. Before we examine DeepSeek's performance, here's a quick overview of how models are measured on code-specific tasks.

Meanwhile, OpenAI CEO Sam Altman welcomed DeepSeek to the AI race, writing in a recent post on X that "r1 is an impressive model, particularly around what they’re able to deliver for the price," and "we will obviously deliver much better models and also it’s legit invigorating to have a new competitor!"
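Code-generation benchmarks such as HumanEval are commonly scored with pass@k. This is the probability that at least one of k sampled completions passes the problem's unit tests. The post does not say which metric the cited benchmarks use, so treat that as an assumption. Under it, here is a minimal sketch of the standard unbiased estimator, 1 - C(n-c, k) / C(n, k), where n completions are sampled per problem and c of them pass. The function names are illustrative only.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for a single problem.

    n: completions sampled, c: completions that passed the tests, k: budget.
    """
    if k > n:
        raise ValueError("k must not exceed n")
    if n - c < k:
        # Any k samples drawn must include at least one passing sample.
        return 1.0
    # 1 - P(all k drawn samples fail) = 1 - C(n-c, k) / C(n, k)
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over a benchmark; results holds (n, c) per problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

For example, a problem with 10 samples of which 3 pass has pass@1 = 0.3. The estimator averages over all size-k subsets of the n samples, which is less noisy than drawing exactly k samples once.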

It’s a very capable model, but not one that sparks as much joy to use as Claude or super-polished apps like ChatGPT, so I don’t expect to keep using it long term. But it’s very hard to compare Gemini versus GPT-4 versus Claude simply because we don’t know the architecture of any of these models. On top of the efficient architecture of DeepSeek-V2, we pioneer an auxiliary-loss-free strategy for load balancing, which minimizes the performance degradation that arises from encouraging load balancing. A natural question arises concerning the acceptance rate of the additionally predicted token. DeepSeek-V2.5 excels in a range of critical benchmarks, demonstrating its superiority in both natural language processing (NLP) and coding tasks. "the model is prompted to alternately describe a solution step in natural language and then execute that step with code". The model was trained on 2,788,000 H800 GPU hours at an estimated cost of $5,576,000.
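The auxiliary-loss-free load-balancing idea mentioned above can be sketched roughly as follows. This assumes the bias-adjustment scheme described in the DeepSeek-V3 technical report:

- Each expert carries a bias that is added to its affinity score only when choosing a token's top-k experts.
- The gate weights themselves still come from the unbiased scores.
- After each step, an overloaded expert's bias is lowered by a fixed step `gamma` and an underloaded expert's bias is raised.

The function names, the pure-Python data layout, and the `gamma` value are illustrative assumptions, not DeepSeek's code.

```python
def route_tokens(scores, bias, top_k):
    """Select experts with biased scores; compute gate weights from unbiased ones.

    scores: per-token lists of expert affinity scores (assumed already sigmoid-ed).
    bias:   per-expert bias, used ONLY for choosing which experts fire.
    Returns one {expert_index: gate_weight} dict per token.
    """
    routes = []
    for token_scores in scores:
        biased = [s + b for s, b in zip(token_scores, bias)]
        chosen = sorted(range(len(biased)), key=lambda e: biased[e], reverse=True)[:top_k]
        # Normalize the original (unbiased) scores over the chosen experts.
        total = sum(token_scores[e] for e in chosen)
        routes.append({e: token_scores[e] / total for e in chosen})
    return routes


def update_bias(bias, routes, gamma):
    """After a step, nudge each expert's bias toward the mean load."""
    load = [0] * len(bias)
    for route in routes:
        for e in route:
            load[e] += 1
    mean_load = sum(load) / len(bias)
    new_bias = []
    for b, l in zip(bias, load):
        if l > mean_load:
            b -= gamma  # overloaded: less likely to be picked next step
        elif l < mean_load:
            b += gamma  # underloaded: more likely to be picked next step
        new_bias.append(b)
    return new_bias, load


# Hypothetical example: every token initially prefers expert 0.
scores = [[0.9, 0.5, 0.1]] * 4
bias = [0.0, 0.0, 0.0]
routes = route_tokens(scores, bias, top_k=1)
bias, load = update_bias(bias, routes, gamma=0.5)
print(load, bias)  # prints "[4, 0, 0] [-0.5, 0.5, 0.5]"
```

The bias never enters the gate weights, so the balancing pressure only changes which experts are selected, not how their outputs are mixed. The intuition is that this avoids adding a loss term whose gradients compete with the main training objective.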

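The training-cost estimate is total GPU hours multiplied by a per-hour price. Dividing the two figures given in the post recovers the implied rental rate. Only the arithmetic is shown here, and the constant and function names are illustrative.

```python
# Figures as stated in the post.
GPU_HOURS = 2_788_000            # H800 GPU hours
ESTIMATED_COST_USD = 5_576_000   # estimated training cost in US dollars


def training_cost(gpu_hours: float, price_per_gpu_hour: float) -> float:
    """Estimated cost = total GPU hours x rental price per GPU hour."""
    return gpu_hours * price_per_gpu_hour


# Implied rental price per GPU hour.
price_per_gpu_hour = ESTIMATED_COST_USD / GPU_HOURS
print(f"${price_per_gpu_hour:.2f} per H800 GPU hour")  # prints "$2.00 per H800 GPU hour"
```

In other words, the $5,576,000 figure corresponds to 2,788,000 hours at $2 per H800 GPU hour. As computed, it reflects GPU time alone.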

This makes the model faster and more efficient. Also, with long-tail searches handled at more than 98% accuracy, you can also cater to deep SEO for any kind of keyword. Could it be another manifestation of convergence? Give it concrete examples that it can follow. So a lot of open-source work is stuff that you can get out quickly that ge